AI and Knowledge Workers: The Real Challenge Is Cognitive Drift

Discussion of AI and knowledge work keeps getting pulled into the same bad debate: either AI will replace most intelligent work, or it will make everyone dramatically more productive. Neither framing is quite right. The sharper issue is cognitive drift: the slow erosion of judgement, stopping rules, and thinking discipline at the exact moment higher-order human cognition becomes more valuable.

What matters most is not only what AI can do, but what repeated AI use does to how people work. It is getting easier to offload mapping, synthesis, and evaluation to models, easier to work past natural stopping points, and easier to confuse speed with intelligent action. That is a dangerous mix for knowledge workers, founders, and operators whose value depends on judgement rather than raw output alone.

This on-site edition preserves the core argument of the original article first published on Substack on March 9, 2026, while adapting it for IQMindware's AI and knowledge-work learning cluster.

Why AI is changing knowledge work

The most useful way to think about AI and knowledge work right now is neither replacement panic nor naive productivity optimism. AI is raising the premium on human judgement, resilience, and systems thinking at the same time that it makes those capacities easier to offload.

The World Economic Forum's Future of Jobs Report 2025 is explicit about what employers still value: analytical thinking, creative thinking, resilience, flexibility, agility, AI literacy, technological literacy, and systems thinking remain among the most important capabilities in the market. In other words, the economy still prizes higher-order human cognition even as AI adoption accelerates.

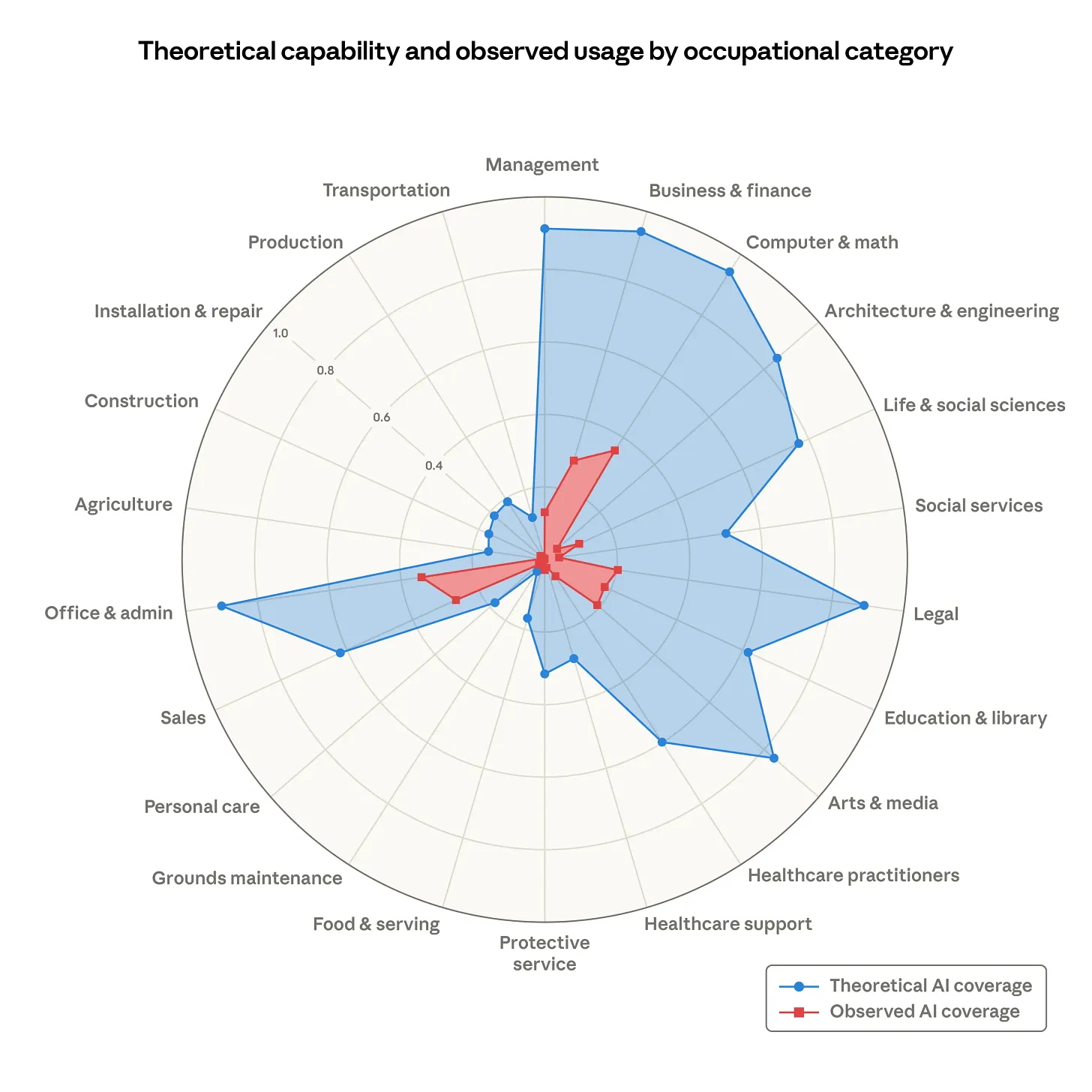

At the same time, Anthropic's report, Labor market impacts of AI: A new measure and early evidence, points to a more layered reality than simple automation headlines. The March 5, 2026 analysis shows meaningful AI exposure in a number of knowledge-work occupations, but not a broad unemployment shock in those roles. What does show up is early hiring friction, especially for younger workers entering more exposed fields. That matters because the pressure may appear first as thinner career ladders, slower entry, and role redesign before it appears as obvious mass displacement.

That is the first pressure point for knowledge workers. The work is not disappearing in one clean step. The shape of intelligent work is changing, and the people who thrive in that shift are likely to be those who can still frame problems, prioritize under ambiguity, and hold quality under load.

AI burnout and workload intensification

The second pressure point is burnout. The promise of AI is that faster work should mean lighter work. In practice, it often means faster cycles, more parallel demands, and fewer natural stopping points.

The Harvard Business Review article "AI Doesn't Reduce Work-It Intensifies It" states the point in its title. Tasks get completed faster, but expectations rise with them. The standard shifts from what is sustainable to what is now technically possible. Throughput increases, and so does cognitive fatigue.

This is not a side issue. For knowledge workers, burnout pressure directly degrades judgement quality. When there are no clean stopping rules, people drift into always-on mode, constant context switching, and shallow decision loops. The work looks efficient on the surface while becoming less intelligent underneath.

Cognitive offloading and critical thinking

The third pressure point is cognitive offloading. Humans have always offloaded memory and routine tasks, but generative AI makes it possible to offload more of the thinking process itself. That is where cognitive drift becomes a real strategic risk.

Michael Gerlich's 2025 mixed-method study, AI Tools in Society: Impacts on Cognitive Offloading and the Future of Critical Thinking, reported a negative association between heavier AI tool use and critical thinking, with cognitive offloading acting as a mediator. That does not settle the whole question forever, but it is a serious warning sign. If people repeatedly hand over discrimination, evaluation, synthesis, and first-pass mapping to the model, they may weaken the very habits employers still claim to need most.
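For readers less familiar with the term, a mediator claim of this kind is usually written as a two-equation mediation model. The sketch below is the generic textbook form, not the specification reported in Gerlich's study; the symbols X, M, and Y and the coefficient names are labels assumed here for illustration.

```latex
% Generic single-mediator model (textbook form; an assumption, not the study's own specification)
% X = AI tool use, M = cognitive offloading, Y = critical thinking
\begin{align}
M &= i_1 + aX + \varepsilon_1 \\
Y &= i_2 + c'X + bM + \varepsilon_2 \\
\text{indirect effect} &= ab, \qquad \text{total effect } c = c' + ab
\end{align}
```

On the reading described above, one would expect a > 0 (heavier use goes with more offloading) and b < 0 (more offloading goes with lower critical-thinking scores), so the indirect path ab is negative. A model like this describes a pattern of association and does not by itself establish cause.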

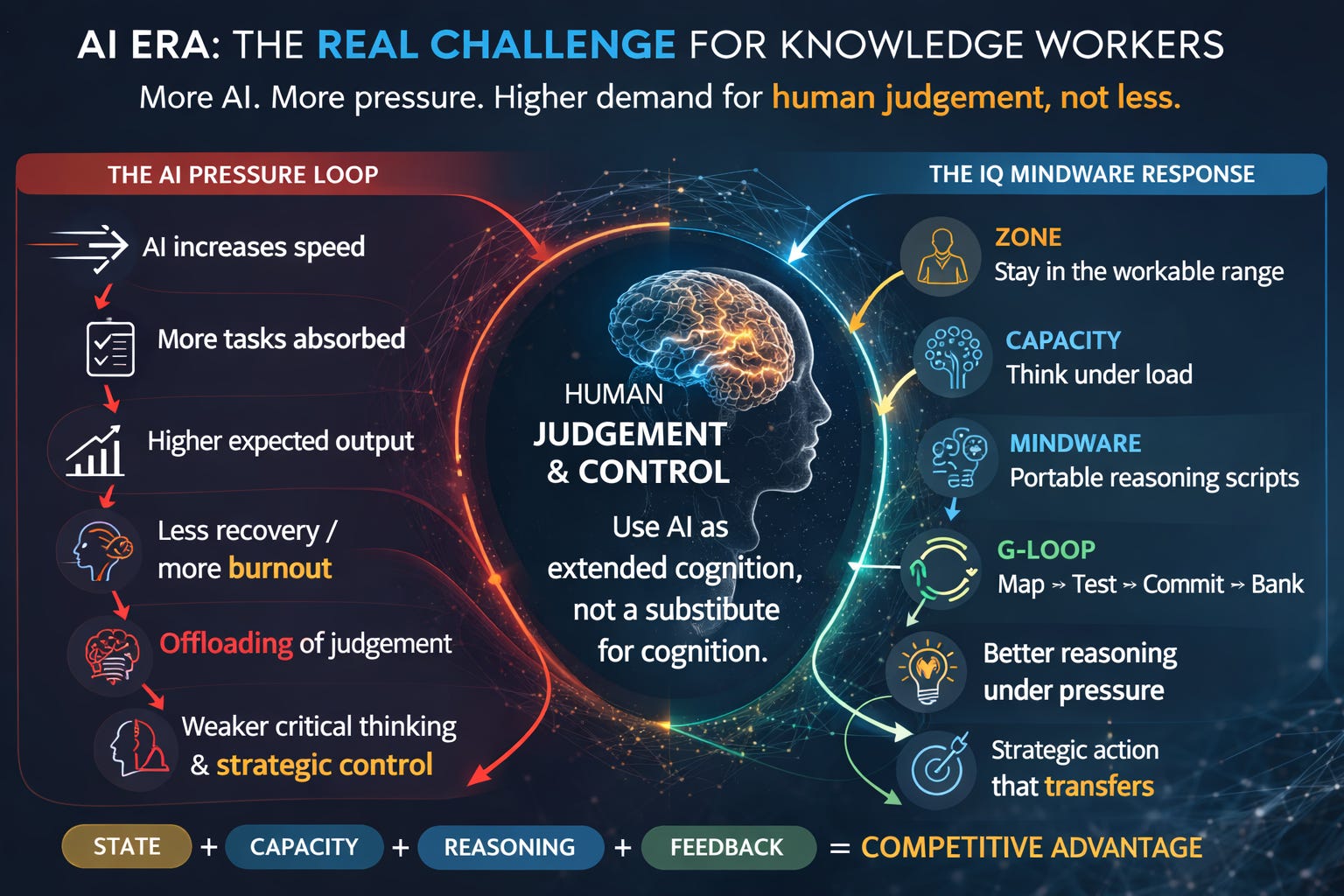

This is why cognitive drift is a better frame than simple productivity. The issue is not whether AI can help. It clearly can. The issue is whether repeated reliance turns AI into extended cognition, or into a substitute for cognition. IQMindware's existing pieces on the ORSAR Framework and the Human-Led AI Reasoning Protocol are practical responses to that same risk: keep the human upstream of framing, stress-testing, and final judgement.

Why the bottleneck is moving from syntax to systems

The entrepreneurial angle matters just as much. AI appears to be lowering the cost of prototyping and software production, but that does not make judgement less important. It shifts the bottleneck upward.

OpenAI's February 2, 2026 post, Introducing the Codex app, is revealing here. The framing is no longer "write every line yourself." It is increasingly about supervising coordinated agents across design, build, shipping, and maintenance. That means the comparative advantage is moving away from syntax alone and toward problem choice, system design, specification quality, validation, and feedback loops.

For founders and intrapreneurs, this changes the game. If execution friction drops, then choosing the right mission matters more. Framing the right test matters more. Building systems that learn from feedback matters more. The people who do best may not be the people who use the most AI. They may be the people who can use AI without surrendering independent reasoning, state discipline, and strategic control.

What IQMindware is designed to train

That is why the right response is not simply "learn more AI tools." It is to train the layer above the tools.

For IQMindware, that means four things.

- Zone: stay in a workable state rather than oscillating between overload and flatness.

- Capacity: build the control needed to hold attention, resist drift, and think under load.

- Mindware: develop portable reasoning and decision scripts rather than relying on one-off prompts or vague intuition.

- The G-Loop: map, test, commit, and bank what survives real checks so that good thinking becomes more reusable and more transferable.

This is the bridge between AI literacy and durable cognition. The goal is not to reject AI. It is to use AI as extended cognition, not as a substitute for cognition. That is also why the IQMindware app stack is built around state, control, reasoning scripts, and transfer checks rather than around prompt tricks alone.

Bottom line for knowledge workers and founders

The practical thesis is simple.

- Use AI as extended cognition, not as a substitute for cognition.

- Train reasoning under load.

- Protect critical thinking from offloading drift.

- Build systems that compound judgement rather than erode it.

That is the deeper opportunity in the AI era. If AI lowers the friction of execution, comparative advantage shifts further toward choosing the right problem, setting the right tests, and building systems that improve with feedback rather than generating more noise. The real challenge for knowledge workers is not replacement alone. It is whether they can keep independent reasoning, resilience, and systems thinking intact while the tools get easier to use.

For claims boundaries on IQMindware's work, see /proof#claims. For a practical next step, read the protocol articles above and then explore the live tools.

Sources behind this article

- World Economic Forum, The Future of Jobs Report 2025

- Anthropic, Labor market impacts of AI: A new measure and early evidence

- Harvard Business Review, AI Doesn't Reduce Work-It Intensifies It

- Michael Gerlich, AI Tools in Society: Impacts on Cognitive Offloading and the Future of Critical Thinking

- OpenAI, Introducing the Codex app

- Original Substack article published March 9, 2026