The Science of Learning Curves: Overcoming Plateaus and the Fluency Trap

A science-backed guide to learning plateaus, piecewise skill acquisition, thin automation, and the illusion of competence.

We often think of learning and skill acquisition as being about one simple question: Am I improving?

But this can be the wrong take on your cognitive efforts.

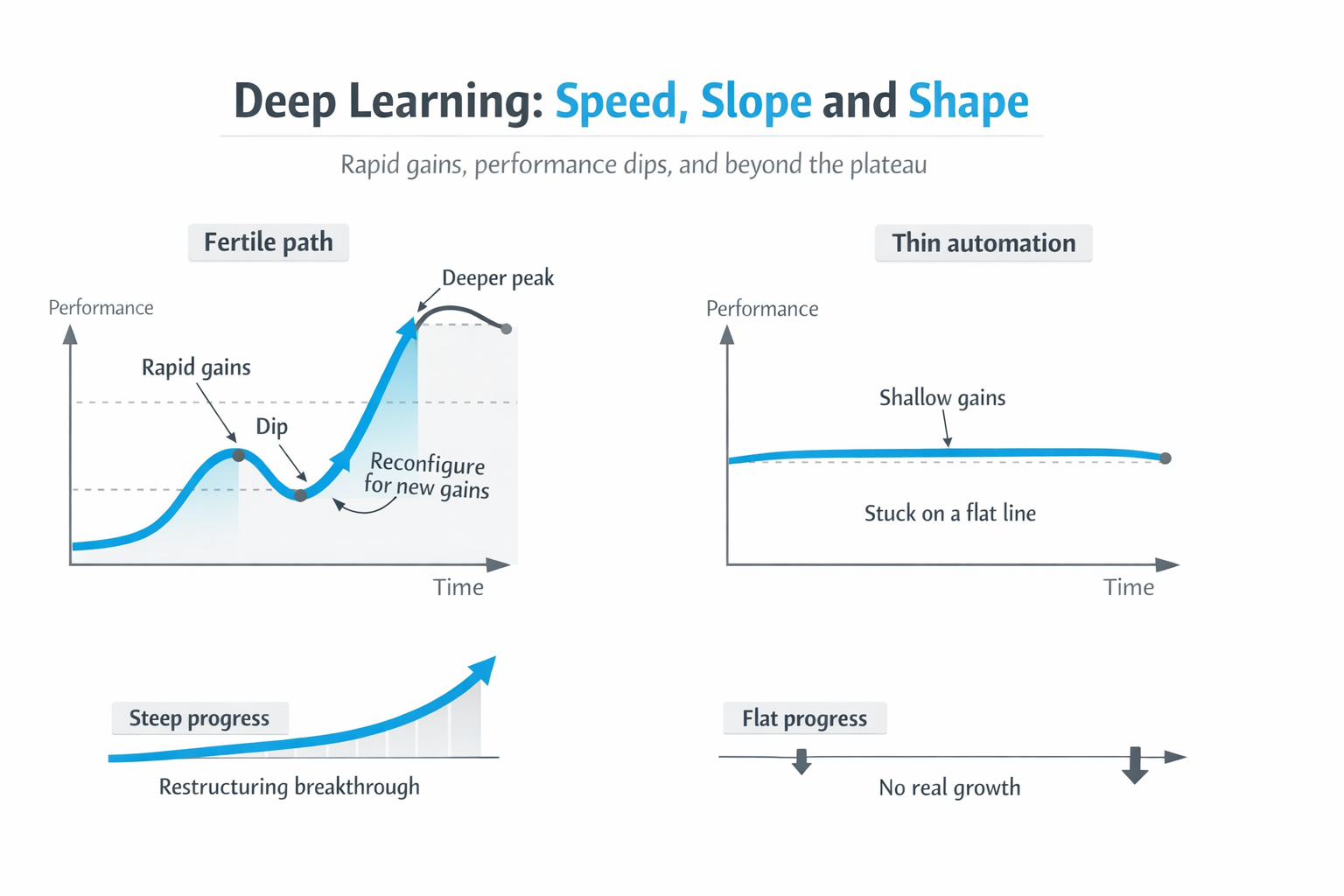

Often, the better question to ask is: What kind of learning curve am I on? Because not all improvement curves are equal. Some are fertile and productive. Some are shallow. Some give you a burst of rapid gains, then flatten, forcing a deeper cognitive restructuring. Others never really take off at all; they just let you become more polished, more efficient, and more trapped inside a skill set too narrow to change your life.

The speed matters. The slope matters. The shape matters. If you do not understand the psychology of your learning curve, you can easily give up at exactly the wrong moment or persist for years on exactly the wrong surface.

This on-site edition keeps the core argument intact while making the article easier to discover for readers searching for answers about learning plateaus, skill acquisition, and the illusion of competence.

The piecewise learning curve: why progress is not smooth

The classic power-law view of learning suggests that gains come quickly at first and then gradually slow down. However, researchers Donner and Hardy (2015) sharpened that picture. They demonstrated that individual learning curves are often better described not as one smooth power law, but as piecewise curves: periods of rapid local improvement within a strategy, punctuated by strategy shifts.

Crucially, these shifts often feature a brief performance drop at the transition before climbing again from a higher base. That is the key to genuine cognitive growth: fast descent, flattening, awkward restructuring, then a new climb.

This multi-timescale framework gives us two very different life scenarios.

Scenario one: the good bowl and learning plateaus

You change something important. You leave a job, adopt a new AI workflow, or stop trying to solve a problem with old mental machinery and start using a better cognitive representation.

Then something compelling happens: you make rapid progress. Dopamine is in full flow.

The new configuration gives you a steep early slope. Things click, and your effort suddenly converts into visible results. You are descending what we can call a fruitful local basin, or a good bowl. Once you have the right tools or mental models, exploitation starts paying off fast. This aligns with James March's broader argument regarding exploration vs exploitation: exploitation refines existing certainties more rapidly than exploration discovers new ones.

But good bowls do not go on forever.

The early slope flattens. The easy gains are harvested. What looked like a take-off starts to look like a learning plateau. Think of a foraging animal: at first, food is everywhere. But soon, the visible fruit has been picked clean. The rate of return changes.

Stalling, the dip, and how to overcome a learning plateau

This pivot point is where many people make the wrong call. They think hitting a learning plateau means the whole strategy has failed. Often, it means you have simply reached the bottom of the current bowl.

At that point, there are only two real options:

- Give up and retreat to your old configuration.

- Recognize the first wave of gains, and tolerate a period of temporary disorganization while you reconfigure for a deeper descent.

This is where the dip appears, and where people often misread the signal. A drop in performance after a transition is not necessarily evidence that you are doing something wrong. Donner and Hardy found that later curve segments typically outperformed earlier ones after a brief drop. The system gets worse before it gets better because one strategy is being destabilized before a better one becomes fluent.

If you want to know how to overcome a learning plateau, the answer is often not to grind harder inside the same strategy. It is to detect whether the plateau is the bottom of a fertile bowl or the ceiling of a shallow one.

Scenario two: the wrong hill and thin automation

Now consider the opposite case. You are working hard, polishing techniques, and smoothing your workflow. On paper, this looks like skill acquisition.

But there is no real take-off. There is no steep early slope suggesting you have found a genuinely fertile basin. Instead, the gains are thin, local, and quickly absorbed by the same narrow structure you were already trapped inside. You are just becoming more efficient on the wrong hill.

I call this Thin Automation.

Thin automation happens when learning is real, but too shallow to be transformative. You get better at the wrapper, the surface, not at the deeper cognitive structure. Your adaptive range does not expand. The gains do not travel across contexts, and they do not produce the portable competence that makes you feel like you are genuinely growing.

In Trident-G terms, this is the difference between structural growth and context-bound success. A shallow path rewards local performance. You get better, but not broader. Sharper, but not deeper. You optimize, but you do not evolve.

This is also why many readers interested in training strategies or far transfer end up asking the same question: are the gains actually traveling, or are they just becoming smoother inside one narrow setup?

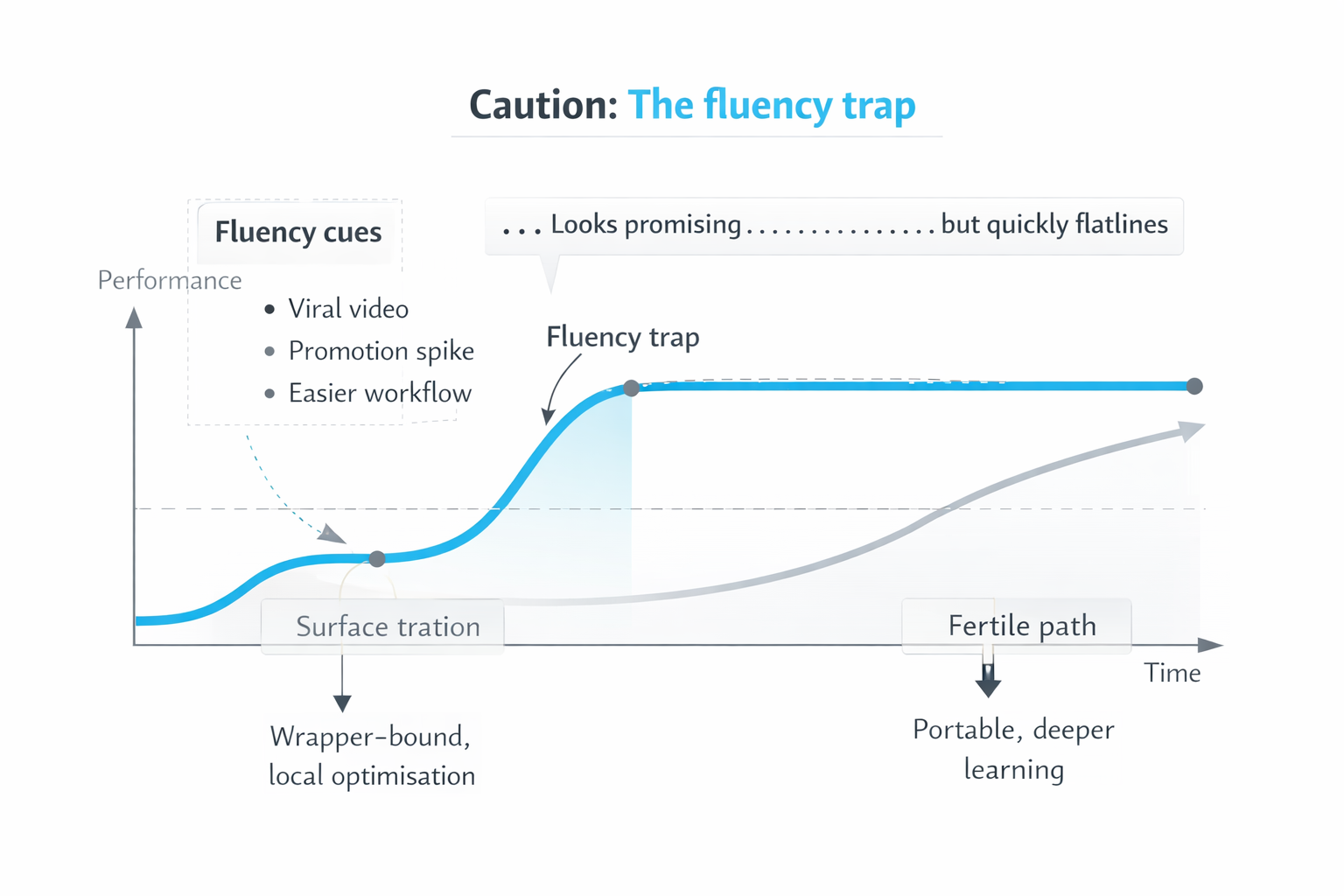

Caution: the fluency trap and the illusion of competence

There is one important complication: not every early surge means you have found a good bowl.

Sometimes, what feels like rapid progress is merely fluency: the ease, smoothness, or social reward of a configuration that looks promising but is not actually producing deep learning. A viral video or a brilliantly efficient new workflow can create the impression of being on the right path, only to flatten almost immediately.

The learning literature is clear on this point. People are routinely misled by fluency cues. In cognitive psychology, this is known as the illusion of competence (Koriat and Bjork, 2005). Ease, familiarity, and coherence can be mistaken for genuine mastery. In one study, students rated a more fluent instructor as better and predicted more learning, even though actual learning did not improve (Carpenter et al., 2013). What feels smooth is not always what transfers.

The ultimate test of cognitive growth

Rate of improvement alone is not enough. The real test is whether your gains travel:

- Do they survive a change of context?

- Do they hold up under pressure?

- Do they deepen your adaptive range, or merely make you polished inside one narrow setup?

We can easily be misled into mistaking surface traction for structural growth. Stop optimizing the mud-bank of thin automation, and start seeking the deep, structural learning curves that carry real expansion.

If you want a related IQMindware frame for why some insights never become reusable skill, read Fluid and Crystallized Intelligence. For claims boundaries on cognitive training and transfer, see the claims ladder.

Sources behind this article

- Carpenter, S. K., Wilford, M. M., Kornell, N., & Mullaney, K. M. (2013). Appearances can be deceiving: Instructor fluency increases perceptions of learning without increasing actual learning.

- Donner, Y., & Hardy, J. L. (2015). Piecewise power laws in individual learning curves.

- Durstewitz, D., Vittoz, N. M., Floresco, S. B., & Seamans, J. K. (2010). Abrupt transitions between prefrontal neural ensemble states accompany behavioral transitions during rule learning.

- Koriat, A., & Bjork, R. A. (2005). Illusions of competence in monitoring one's knowledge during study.

- March, J. G. (1991). Exploration and exploitation in organizational learning.

- Nassar, M. R., Wilson, R. C., Heasly, B., & Gold, J. I. (2010). An approximately Bayesian delta-rule model explains the dynamics of belief updating in a changing environment.

- Smith, M. A., Ghazizadeh, A., & Shadmehr, R. (2006). Interacting adaptive processes with different timescales underlie short-term motor learning.

- Zhang, X.-Y., & Tang, C. (2025). Heavy-tailed update distributions arise from information-driven self-organization in nonequilibrium learning.